Stalk

Background

Developing a Kubernetes controller/operator can be a stressful experience. You have to come to terms with eventual consistency (i.e. “trust the cluster, it will work itself out eventually”), as well as frequent retries and conflicts, because yours is not the only application running in the cluster and modifying Kubernetes resources (just because you created a ConfigMap does not mean that some other application, unknown to you, won't try to modify it). Things get even more funky when your controllers begin to operate on multiple clusters at the same time.

One of the biggest issues for me was keeping a mental trace of how my Kubernetes resources evolved over time. Which controller made which modification, and at what point? If a resource ends up stuck in deletion because of some finalizers, how exactly did that happen?

kubectl get can already be used to keep tabs on resources by using the --watch flag, but it's limited and annoying to use when you have to deal with many resources at the same time. Plus, all you see from kubectl get --watch is the full resource, no matter how little was changed.

Stalk it!

stalk can help tremendously in these situations. Instead of writing entire resources to stdout, stalk keeps their latest version in memory and can then present you with a diff view. stalk also allows you to hide irrelevant things (managedFields, anyone?) or to show only specific paths of your resources.
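To make "keep the latest version in memory and show a diff" concrete, here is a minimal Python sketch. It is not how stalk is implemented: ResourceCache and observe are hypothetical names, resources are plain dicts keyed by (kind, namespace, name), and they are rendered as sorted JSON instead of YAML so that only the standard library (difflib.unified_diff) is needed. The context_lines default of 3 mirrors the --context-lines default listed in the help output.

```python
import difflib
import json


class ResourceCache:
    """Hypothetical sketch: remember the last rendered version of every
    resource and produce a unified diff when a new version is observed."""

    def __init__(self, context_lines: int = 3):
        self.context_lines = context_lines
        # (kind, namespace, name) -> rendered lines of the last seen version
        self._last: dict[tuple[str, str, str], list[str]] = {}

    def observe(self, obj: dict) -> str:
        meta = obj.get("metadata", {})
        key = (obj.get("kind", ""), meta.get("namespace", ""), meta.get("name", ""))
        # Sorted JSON stands in for YAML so the sketch needs only the stdlib.
        new_lines = json.dumps(obj, indent=2, sort_keys=True).splitlines()
        old_lines = self._last.get(key, [])
        self._last[key] = new_lines
        diff = difflib.unified_diff(
            old_lines,
            new_lines,
            fromfile="previous",
            tofile="current",
            n=self.context_lines,
            lineterm="",
        )
        return "\n".join(diff)


if __name__ == "__main__":
    cache = ResourceCache()
    deployment = {
        "kind": "Deployment",
        "metadata": {"namespace": "default", "name": "web"},
        "status": {"replicas": 3, "readyReplicas": 0},
    }
    cache.observe(deployment)  # first sighting: every line is "added"
    deployment["status"]["readyReplicas"] = 1
    print(cache.observe(deployment))  # only the readyReplicas line changes
```

The first call for a resource diffs against an empty document, so every line shows up as added; observing an unchanged resource again yields an empty diff. Storing the rendered lines rather than the dict itself also means later in-place mutations of the caller's object cannot corrupt the cached version.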

```
$ stalk --help
Usage of stalk:
  -c, --context-lines int       Number of context lines to show in diffs (default 3)
  -w, --diff-by-line            Compare entire lines and do not highlight changes within words
  -h, --hide stringArray        Path expression to hide in output (can be given multiple times)
      --hide-managed            Do not show managed fields (default true)
  -j, --jsonpath string         JSON path expression to transform the output (applied before the --show paths)
      --kubeconfig string       Kubeconfig file to use (uses $KUBECONFIG by default)
  -l, --labels string           Label-selector as an alternative to specifying resource names
  -n, --namespace stringArray   Kubernetes namespace to watch resources in (supports glob expression) (can be given multiple times)
  -s, --show stringArray        Path expression to include in output (can be given multiple times) (applied before the --hide paths)
  -e, --show-empty              Do not hide changes which would produce no diff because of --hide/--show/--jsonpath
  -v, --verbose                 Enable more verbose output
  -V, --version                 Show version info and exit immediately
```
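The --show and --hide options can be pictured as two passes over a resource: first keep only the requested paths, then delete the hidden ones, with metadata.managedFields hidden by default as --hide-managed describes. The Python sketch below assumes a simple dot-separated path syntax (for example status.readyReplicas); stalk's actual path expressions may differ, and show_paths, hide_paths and filter_resource are hypothetical helpers, not part of stalk.

```python
import copy


def _split(path: str) -> list[str]:
    # Assumed syntax: dot-separated map keys, e.g. "metadata.managedFields".
    return [part for part in path.split(".") if part]


def show_paths(obj: dict, paths: list[str]) -> dict:
    """Keep only the given paths (like --show); no paths keeps everything."""
    if not paths:
        return copy.deepcopy(obj)
    result: dict = {}
    for path in paths:
        keys = _split(path)
        if not keys:
            continue
        value = obj
        for key in keys:
            if not isinstance(value, dict) or key not in value:
                break  # path does not exist in this resource
            value = value[key]
        else:
            # Path exists: rebuild its parent maps and copy the value over.
            target = result
            for key in keys[:-1]:
                target = target.setdefault(key, {})
            target[keys[-1]] = copy.deepcopy(value)
    return result


def hide_paths(obj: dict, paths: list[str]) -> dict:
    """Remove the given paths (like --hide); missing paths are ignored."""
    result = copy.deepcopy(obj)
    for path in paths:
        keys = _split(path)
        if not keys:
            continue
        parent = result
        for key in keys[:-1]:
            if not isinstance(parent, dict):
                break
            parent = parent.get(key)
        if isinstance(parent, dict):
            parent.pop(keys[-1], None)
    return result


def filter_resource(obj: dict, show=(), hide=(), hide_managed: bool = True) -> dict:
    hidden = list(hide)
    if hide_managed:
        hidden.append("metadata.managedFields")
    # Same order as the help text: --show paths first, then --hide paths.
    return hide_paths(show_paths(obj, list(show)), hidden)
```

Filtering before diffing is also why a change that only touches hidden fields can end up producing no diff at all; per the help text above, such changes are suppressed unless --show-empty is given.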

Examples

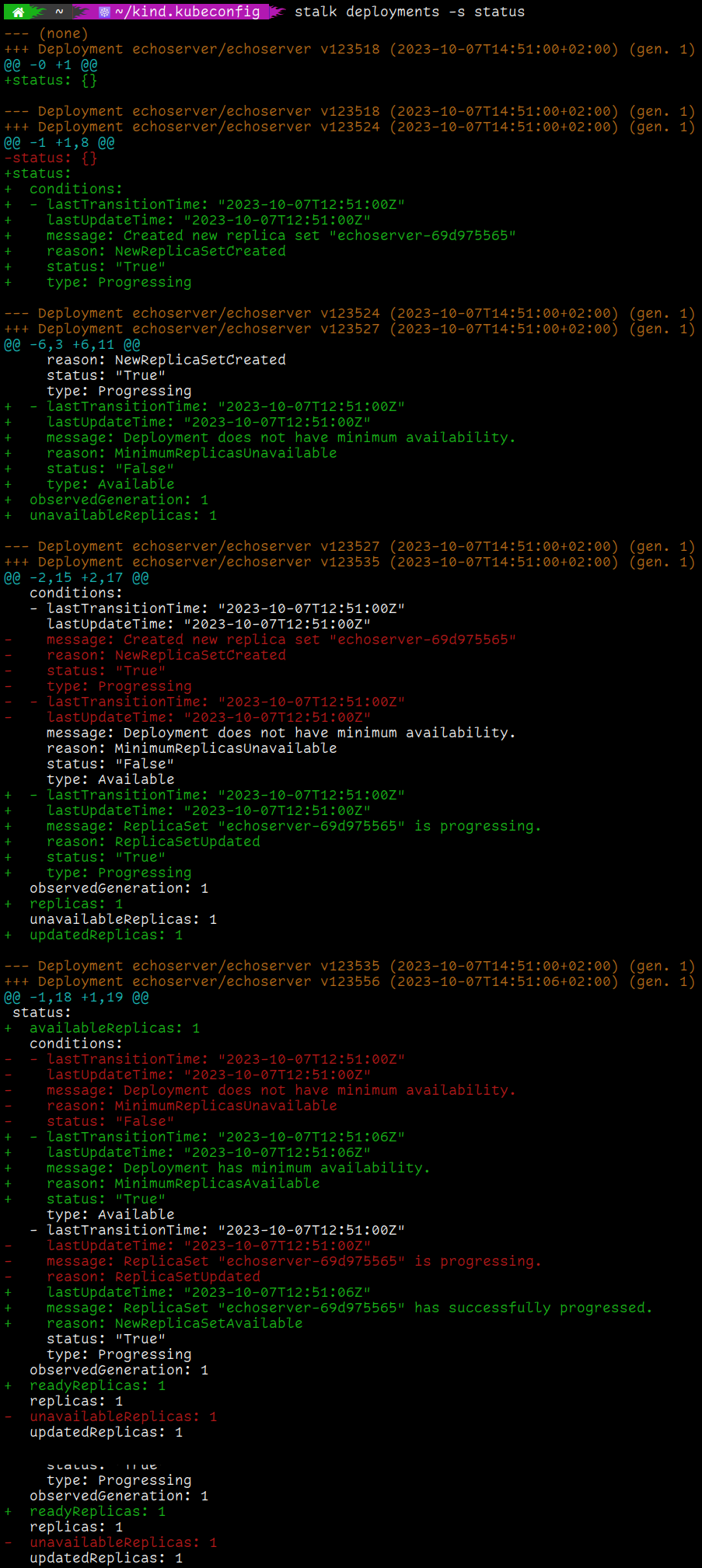

Ever wondered how a Deployment progresses?

A Kubernetes Deployment has fields like readyReplicas, replicas, availableReplicas etc. in its status. But when you create a Deployment, how do these fields actually change over time as Pods are started and deleted?

stalk can tell you! The command below will stalk all Deployments in your cluster (in all namespaces) and will only show the status subresource:

```
$ stalk deployments -s status
```

Find out why your changes won’t stick

Sometimes you try to edit a Kubernetes resource and your changes are seemingly lost or overwritten. The root cause is usually some other controller that, for whatever reason, immediately undoes your changes. Sometimes you are not even involved; instead, two or more components in your cluster fight over the state of a single resource (which of course should not happen in production systems). If you have ever tried to hammer kubectl get somethingsomething foo -o yaml over and over again, hoping to catch the stray change, then stalk can help you here too. Just run…

```
$ stalk somethingsomething foo
```

and you can quickly see what’s going on.
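When two components fight over a field, the successive versions of the resource would typically show a value being set, changed and then set back again. Given a list of successive versions of a resource (however you collected them), a tiny helper like the hypothetical find_reverts below can point at the versions where a field flipped back to an earlier value. This is an illustrative Python sketch, not a stalk feature, and it assumes the same dot-separated path syntax as plain nested dict lookups.

```python
from typing import Any


def _get(obj: Any, keys: list[str]) -> Any:
    # Walk nested dicts; a missing key yields None.
    for key in keys:
        if not isinstance(obj, dict) or key not in obj:
            return None
        obj = obj[key]
    return obj


def find_reverts(history: list[dict], path: str) -> list[int]:
    """Return the indexes of versions in which the value at `path` went back
    to what it was two versions earlier (A -> B -> A)."""
    keys = [part for part in path.split(".") if part]
    values = [_get(version, keys) for version in history]
    return [
        i
        for i in range(2, len(values))
        if values[i] == values[i - 2] and values[i] != values[i - 1]
    ]


if __name__ == "__main__":
    versions = [
        {"spec": {"replicas": 3}},  # your edit
        {"spec": {"replicas": 5}},  # changed by another component
        {"spec": {"replicas": 3}},  # changed back
    ]
    print(find_reverts(versions, "spec.replicas"))  # [2]
```

Only direct A -> B -> A flips are reported; slower oscillations across more versions would need a longer look-back window.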